The Future of Software: Building Products with Privacy at the Core

This article is part of a VB special issue, “Building the foundation for customer data quality.”

Like cybersecurity, privacy often gets rushed into a product release instead of being integral to every platform refresh. And just as security work in DevOps and testing often gets bolted on at the end of a system development life cycle (SDLC), privacy too often reflects how rushed it has been instead of being planned as a core part of each release.

As a result, privacy never delivers what it could, and customers get a mediocre experience instead. Developers must make privacy an essential part of the SDLC if they are to deliver the full scope of what customers want regarding data integrity, quality and control.

“Privacy starts with account security. If a criminal can access your accounts, they have complete access to your life and your assets. FIDO Authentication, from the FIDO Alliance, protects accounts from phishing and other attacks,” Dennis Moore, CEO of Presidio Identity, told VentureBeat in a recent interview. Moore advises organizations “to really limit liability and protect customers, reduce the amount of data collected, improve data access policies to limit who can access data, use polymorphic encryption to protect data, and strengthen account security.”
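
Moore’s first recommendation, reducing the amount of data collected, is also the easiest to automate. The Python sketch below shows one way to do it, assuming a hypothetical `ALLOWED_FIELDS` table that maps each processing purpose to the fields it genuinely needs; `minimize` and the field names are illustrative and not part of any product mentioned here. Fields that are not on the list are dropped before anything is stored.

```python
from typing import Any

# Hypothetical allowlist: each processing purpose keeps only the fields it needs.
ALLOWED_FIELDS: dict[str, frozenset[str]] = {
    "shipping": frozenset({"name", "street", "city", "postal_code"}),
    "newsletter": frozenset({"email"}),
}


def minimize(record: dict[str, Any], purpose: str) -> dict[str, Any]:
    """Return a copy of `record` with only the fields allowed for `purpose`.

    An unknown purpose raises KeyError instead of silently keeping everything.
    """
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


signup = {
    "email": "ana@example.com",
    "name": "Ana",
    "birthdate": "1990-01-01",
    "ssn": "123-45-6789",
}
print(minimize(signup, "newsletter"))  # {'email': 'ana@example.com'}
```

Raising on an unknown purpose is a deliberate choice in this sketch: any new use of customer data forces someone to decide, in review, which fields it may keep.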

Privacy needs to shift left in the SDLC

Getting privacy right must be a high priority in DevOps cycles, starting with integrating it into the SDLC. The goal should be to bake privacy in early, taking a shift-left mindset when creating new privacy safeguards and features.
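
In practice, shifting privacy left often means turning part of a privacy review into an automated check that runs on every change. The sketch below assumes a hypothetical convention in which each schema field carries metadata and personal fields must declare a `classification`; the `unclassified_pii` helper and its name-matching heuristic are illustrative only and will produce both false positives and misses.

```python
import re

# Hypothetical heuristic: column names that suggest personal data.
PII_NAME_PATTERN = re.compile(r"email|phone|ssn|birth|address|name", re.IGNORECASE)


def unclassified_pii(schema: dict[str, dict[str, str]]) -> list[str]:
    """Return fields whose names look like PII but that lack a 'classification'."""
    return sorted(
        field
        for field, meta in schema.items()
        if PII_NAME_PATTERN.search(field) and "classification" not in meta
    )


# A schema as a developer might propose it in a pull request.
orders_schema = {
    "order_id": {"type": "uuid"},
    "customer_email": {"type": "text", "classification": "pii"},
    "shipping_address": {"type": "text"},  # new column, not yet classified
}

problems = unclassified_pii(orders_schema)
print(problems)  # ['shipping_address']
```

Run as a test in the CI pipeline, a non-empty result would fail the build, so the question of how a new column is protected gets answered before release rather than after.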

DJ Patil, mathematician and former U.S. chief data scientist, shared his insights on privacy in a LinkedIn Learning segment called “How can people fight for data privacy?” “If you’re a developer or designer, you have a responsibility,” Patil said. “[J]ust like someone who’s an architect of the ability to make sure that you’re building it (an app or system) in a responsible way, you have the responsibility to say, here’s how we should do it.” That responsibility includes treating customer data as if it were your own family’s data, according to Patil.

Privacy starts by giving users more control over their data

A leading indicator of how much users value control over their data appeared when Apple released iOS 14.5. That release was the first to enforce a policy called App Tracking Transparency (ATT): apps on iPhone, iPad and Apple TV had to request users’ permission before using techniques like the Identifier for Advertisers (IDFA) to track their activity across apps for data collection and ad targeting. Nearly all U.S. users, 96%, opted out of app tracking in iOS 14.5.
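
The consent model behind ATT can be summarized as “no identifier until the user says yes.” The Python sketch below models that idea outside of iOS: the four statuses mirror the cases of Apple’s `ATTrackingManager.AuthorizationStatus`, and the sketch returns an all-zeros identifier unless tracking is authorized. `TrackingStatus` and `advertising_id` are hypothetical names; on a real device this gating is done by Apple’s frameworks, not by app code.

```python
import uuid
from enum import Enum


class TrackingStatus(Enum):
    # Mirrors the four cases of ATTrackingManager.AuthorizationStatus on iOS.
    NOT_DETERMINED = "notDetermined"
    RESTRICTED = "restricted"
    DENIED = "denied"
    AUTHORIZED = "authorized"


# Stand-in for the all-zeros identifier returned when tracking is not allowed.
ZERO_ID = uuid.UUID(int=0)


def advertising_id(status: TrackingStatus, device_id: uuid.UUID) -> uuid.UUID:
    """Release the real identifier only after an explicit opt-in."""
    return device_id if status is TrackingStatus.AUTHORIZED else ZERO_ID


device = uuid.uuid4()
for status in TrackingStatus:
    print(status.value, advertising_id(status, device) != ZERO_ID)
# notDetermined False
# restricted False
# denied False
# authorized True
```

Note that the starting state, `NOT_DETERMINED`, yields no usable identifier: an app that never asks has nothing to track with.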

Worldwide, users want more control over their data than ever before, including the right to be forgotten, a central element of Europe’s General Data Protection Regulation (GDPR) and Brazil’s General Data Protection Law (LGPD). California was the first U.S. state to pass a data privacy law modeled after the GDPR. In 2020, the California Privacy Rights Act (CPRA) amended the California Consumer Privacy Act (CCPA) and included GDPR-like rights. On January 1, 2023, most CPRA provisions took effect, and on July 1, 2023, they will become enforceable.
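
Honoring the right to be forgotten is largely an engineering problem: a system must know every place a person’s data lives and be able to remove it on request. This sketch uses hypothetical in-memory stores (`UserDataStores`) and a hypothetical `erase_user` helper that deletes one user’s records and returns a per-store receipt as evidence. It deliberately ignores backups, downstream copies and legal retention obligations, all of which a real erasure workflow has to handle.

```python
from dataclasses import dataclass, field


@dataclass
class UserDataStores:
    """Hypothetical in-memory stand-ins for every place one user's data lives."""

    profiles: dict[str, dict] = field(default_factory=dict)
    orders: dict[str, list[dict]] = field(default_factory=dict)
    marketing_list: set[str] = field(default_factory=set)


def erase_user(stores: UserDataStores, user_id: str) -> dict[str, bool]:
    """Delete all records for `user_id`; report which stores held data."""
    receipt = {
        "profiles": stores.profiles.pop(user_id, None) is not None,
        "orders": stores.orders.pop(user_id, None) is not None,
        "marketing_list": user_id in stores.marketing_list,
    }
    stores.marketing_list.discard(user_id)
    return receipt


stores = UserDataStores(
    profiles={"u1": {"email": "ana@example.com"}, "u2": {"email": "bo@example.com"}},
    orders={"u1": [{"sku": "A1"}]},
    marketing_list={"u1", "u2"},
)
print(erase_user(stores, "u1"))
# {'profiles': True, 'orders': True, 'marketing_list': True}
```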

The Utah Consumer Privacy Act (UCPA) takes effect on December 31, 2023. The UCPA is modeled after the Virginia Consumer Data Protection Act as well as consumer privacy laws in California and Colorado.

With GDPR, LGPD, CCPA and future laws going into effect to protect customers’ privacy, the seven foundational principles of Privacy by Design (PbD), as defined by former Ontario Information and Privacy Commissioner Ann Cavoukian, have served as guardrails that keep DevOps teams on track as they integrate privacy into their development processes.
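
One of Cavoukian’s seven principles is privacy as the default setting: a user who does nothing should get the most protective configuration. A minimal Python sketch of that principle follows; the `AccountSettings` class, its setting names and the 30-day retention value are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AccountSettings:
    """Hypothetical new-account settings; every default is the most private choice."""

    profile_public: bool = False
    share_usage_analytics: bool = False
    personalized_ads: bool = False
    location_history: bool = False
    retention_days: int = 30  # illustrative short retention window


new_user = AccountSettings()

# Anything less private requires an explicit user action that yields a new object.
after_opt_in = replace(new_user, share_usage_analytics=True)

print(new_user.share_usage_analytics, after_opt_in.share_usage_analytics)  # False True
```

Because the dataclass is frozen, loosening a setting produces a new object through `dataclasses.replace`, which gives the application a natural place to record the user’s explicit choice.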

Privacy by engineering is the future

“Privacy by design is all about intention. What you really want is privacy by engineering,” Anshu Sharma, cofounder and CEO of Skyflow, told VentureBeat during a recent interview. “Or privacy by architecture. What that means is there is a specific way of building applications, data systems and technology, such that privacy is engineered in and is built right into your architecture.”
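
Privacy by architecture often takes the form of a data privacy vault: sensitive values are stored in one isolated service, and every other system holds only opaque tokens. The sketch below illustrates that pattern in a few lines of Python. `PrivacyVault`, its methods and the `tok_` prefix are hypothetical, and this is not Skyflow’s implementation; a production vault would also encrypt data at rest, run as a separate service and log every access.

```python
import secrets


class PrivacyVault:
    """Hypothetical minimal vault: raw values live only here; callers get tokens."""

    def __init__(self, readers: set[str]) -> None:
        self._values: dict[str, str] = {}
        self._readers = frozenset(readers)  # roles allowed to see raw values

    def tokenize(self, value: str) -> str:
        token = "tok_" + secrets.token_hex(8)  # random, reveals nothing about value
        self._values[token] = value
        return token

    def detokenize(self, token: str, role: str) -> str:
        if role not in self._readers:
            raise PermissionError(f"role {role!r} may not read raw values")
        return self._values[token]


vault = PrivacyVault(readers={"billing"})

# The application database stores only the token, never the SSN itself.
app_db = {"user_42": {"name": "Ana", "ssn": vault.tokenize("123-45-6789")}}

token = app_db["user_42"]["ssn"]
print(token.startswith("tok_"))            # True
print(vault.detokenize(token, "billing"))  # 123-45-6789
```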

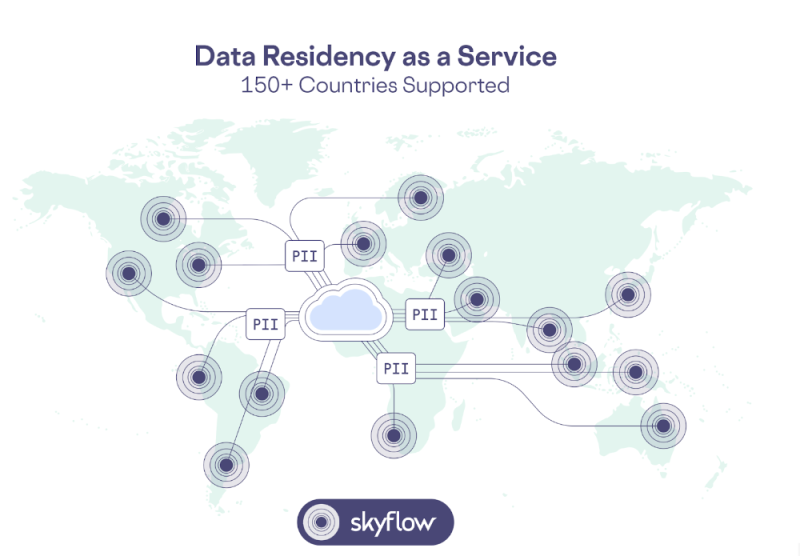

Skyflow provides data privacy vaults. Its customers include IBM (drug discovery AI), Nomi Health (payments and patient data), Science37 (clinical trials) and many others.

Sharma referenced IEEE’s insightful article “Privacy Engineering,” which makes a compelling case for moving beyond the “by-design” phase of privacy to engineering privacy into the core architecture of infrastructure. “We think privacy by engineering is the next iteration of privacy by design,” Sharma said.

The IEEE article makes several excellent points about the importance of integrating privacy engineering into any technology provider’s SDLC processes. One of the most compelling is the cost of shortcomings in privacy engineering: the article notes, for example, that European businesses were fined $1.2 billion in 2021 for violating the GDPR. Fulfilling legal and policy mandates on a scalable platform requires privacy engineering to ensure that any technologies being developed support the goals, direction and objectives of chief privacy officers (CPOs) and data protection officers (DPOs).

Skyflow’s GPT Privacy Vault, launched last month, reflects Sharma’s and the Skyflow team’s commitment to privacy by engineering. “We ended up creating a new way of using encryption called polymorphic data encryption. You can actually keep this data encrypted while still using it,” Sharma said. The Skyflow GPT Privacy Vault gives enterprises granular control over sensitive data throughout the lifecycle of large language models (LLMs) like GPT, ensuring that only authorized users can access specific datasets or functionalities in those systems.
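
Sharma does not describe how polymorphic data encryption works, so the sketch below does not attempt it. It illustrates only the granular-control idea from the same paragraph: one stored value can be released in different forms depending on who asks. The roles, the `SSN_POLICY` table and `render_ssn` are hypothetical; roles without a rule fall back to full redaction.

```python
from enum import Enum


class View(Enum):
    PLAINTEXT = "plaintext"
    MASKED = "masked"
    REDACTED = "redacted"


# Hypothetical policy: which form of an SSN each role may receive.
SSN_POLICY: dict[str, View] = {
    "fraud_analyst": View.PLAINTEXT,
    "support_agent": View.MASKED,
}


def render_ssn(ssn: str, role: str) -> str:
    """Return the representation of `ssn` that `role` is allowed to see."""
    view = SSN_POLICY.get(role, View.REDACTED)  # unknown roles get nothing
    if view is View.PLAINTEXT:
        return ssn
    if view is View.MASKED:
        return "***-**-" + ssn[-4:]
    return "[REDACTED]"


for role in ("fraud_analyst", "support_agent", "llm_prompt_builder"):
    print(role, render_ssn("123-45-6789", role))
# fraud_analyst 123-45-6789
# support_agent ***-**-6789
# llm_prompt_builder [REDACTED]
```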

Skyflow’s GPT Privacy Vault also supports data collection, model training, and redacted and anonymized interactions, so companies can make the most of AI without compromising privacy. It lets global companies use AI while meeting the data residency requirements of regulations such as GDPR and LGPD in every region where they operate today.
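
Meeting data residency requirements usually comes down to routing each record to storage in an approved region before it is written. In this Python sketch, the `RESIDENCY` table, the region names and the `store` helper are hypothetical, and the mapping is an illustrative policy, not a statement of what GDPR or LGPD legally require. Countries without a rule are rejected rather than written to a default region.

```python
# Hypothetical policy: country code -> storage region that must hold the data.
RESIDENCY: dict[str, str] = {
    "DE": "eu-central",
    "FR": "eu-central",
    "BR": "sa-east",
    "US": "us-east",
}

# In-memory stand-ins for per-region databases.
regional_stores: dict[str, list[dict]] = {region: [] for region in set(RESIDENCY.values())}


class ResidencyError(Exception):
    """Raised when no residency rule covers a record."""


def store(record: dict) -> str:
    """Write `record` to the region its country requires and return that region."""
    region = RESIDENCY.get(record["country"])
    if region is None:
        # Fail closed: refuse to store rather than guess a default region.
        raise ResidencyError(f"no residency rule for {record['country']!r}")
    regional_stores[region].append(record)
    return region


print(store({"id": 1, "country": "BR"}))  # sa-east
print(store({"id": 2, "country": "DE"}))  # eu-central
```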

Five privacy questions organizations must ask themselves

“You have to engineer a system such that your social security number will never ever get into a large language model,” Sharma warns. “The right way to think about it is to architect your systems such that you minimize how much sensitive data makes its way into your systems.”
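
One way to keep a Social Security number out of a large language model is to redact it before the prompt leaves your systems and restore it, if needed, only in output shown to an authorized user. The sketch below does this with two hypothetical regular expressions; `redact` and `restore` are illustrative names, real PII detection needs far more than regexes, and the call to the model itself is omitted.

```python
import re

# Hypothetical detectors; real PII detection needs far more than two regexes.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact(text: str) -> tuple[str, dict[str, str]]:
    """Swap PII for placeholders; return the safe text and a placeholder map."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():

        def substitute(match: re.Match, label: str = label) -> str:
            placeholder = f"<{label}_{len(mapping)}>"
            mapping[placeholder] = match.group(0)
            return placeholder

        text = pattern.sub(substitute, text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put original values back, e.g. in a response shown to an authorized user."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text


prompt = "Customer 123-45-6789 (ana@example.com) asked about a refund."
safe_prompt, mapping = redact(prompt)
print(safe_prompt)  # Customer <SSN_0> (<EMAIL_1>) asked about a refund.
# safe_prompt is what would be sent to the model; `mapping` stays in-house.
print(restore(safe_prompt, mapping) == prompt)  # True
```

The placeholder-to-value map never leaves your infrastructure, so in this design the model only ever sees `<SSN_0>`.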

Sharma cautions customers and the industry that LLMs have no “delete” button, so once personally identifiable information (PII) becomes part of an LLM, the potential damage cannot be reversed. “If you don’t engineer it correctly, you’re never going to … unscramble the egg. Privacy can only decrease; it can’t be put back together.”

Sharma advises organizations to consider five questions when implementing privacy by engineering:

- Do you know how much PII data your organization has and how it’s managed today?

- Who has access to what PII data today across your organization and why?

- Where is the data stored?

- Which countries and locations have PII data, and can you differentiate by location what type of data is stored?

- Can you write and implement a policy and show that the policy is getting enforced?
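
The five questions lend themselves to a simple data inventory plus policy-as-code. In this sketch, each hypothetical `DatasetRecord` answers the first four questions (what PII a dataset holds, who can read it, where it is stored and whose country it belongs to), and a hypothetical `audit` function addresses the fifth by checking the inventory against a written policy. The policy values and dataset names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetRecord:
    """One row of a hypothetical PII inventory (questions 1 to 4)."""

    name: str
    pii_fields: tuple[str, ...]  # what PII the dataset holds
    readers: frozenset[str]      # who can access it
    location: str                # where it is stored
    subject_country: str         # whose data it is, by country


# Hypothetical written policy (question 5).
ALLOWED_READERS = frozenset({"billing", "privacy_office"})
ALLOWED_LOCATIONS = {"DE": {"eu-central"}, "BR": {"sa-east"}, "US": {"us-east", "us-west"}}


def audit(inventory: list[DatasetRecord]) -> list[str]:
    """Return policy violations; an empty list is evidence the policy holds."""
    findings = []
    for ds in inventory:
        if not ds.pii_fields:
            continue  # no PII, nothing to check
        extra = ds.readers - ALLOWED_READERS
        if extra:
            findings.append(f"{ds.name}: unapproved readers {sorted(extra)}")
        if ds.location not in ALLOWED_LOCATIONS.get(ds.subject_country, set()):
            findings.append(f"{ds.name}: {ds.subject_country} PII stored in {ds.location}")
    return findings


inventory = [
    DatasetRecord("crm_contacts", ("email", "phone"), frozenset({"sales", "billing"}), "us-east", "DE"),
    DatasetRecord("invoices", ("name",), frozenset({"billing"}), "eu-central", "DE"),
    DatasetRecord("page_views", (), frozenset({"analytics"}), "us-east", "US"),
]
for finding in audit(inventory):
    print(finding)
# crm_contacts: unapproved readers ['sales']
# crm_contacts: DE PII stored in us-east
```

Running `audit` on a schedule and keeping its output is one way to show that a policy is being enforced rather than merely written down.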

Sharma observed that organizations that can answer these five questions have a better-than-average chance of protecting the privacy of their data. For enterprise software companies whose approach to development hasn’t centered privacy on identities, these five questions should guide day-to-day improvements to their SDLC, so that privacy engineering becomes part of how they develop and release software.
