Snapchat adds new teen safety features, cracks down on age-inappropriate content | TechCrunch

Snapchat today is announcing a series of new safeguards for its app aimed at better protecting teen users, similar to efforts introduced earlier by other social apps, like Facebook and Instagram. The company says the new features will make it harder for strangers to contact teens, provide a more age-appropriate experience, crack down on accounts marketing inappropriate content and improve education for teens using its app. The company will also introduce more resources for parents and families, including a new website and YouTube explainer series.

The news comes nearly two years after Snap was hauled before Congress to defend its app’s 13+ age rating on the App Store given its content, which some U.S. senators believed was inappropriate for younger users — including sexualized material and ads, articles about alcohol and pornography, and more. Since then, the company has also been in hot water over its use as a platform of choice for drug dealers, which led to multiple teen deaths, requiring Snap to make a number of operational changes to protect users. And earlier this year, law enforcement reports indicated that child luring and exploitation were on the rise in the app.

Today’s new safeguards take aim at some of these concerns by introducing features designed to better protect 13- to 17-year-olds from online risks.

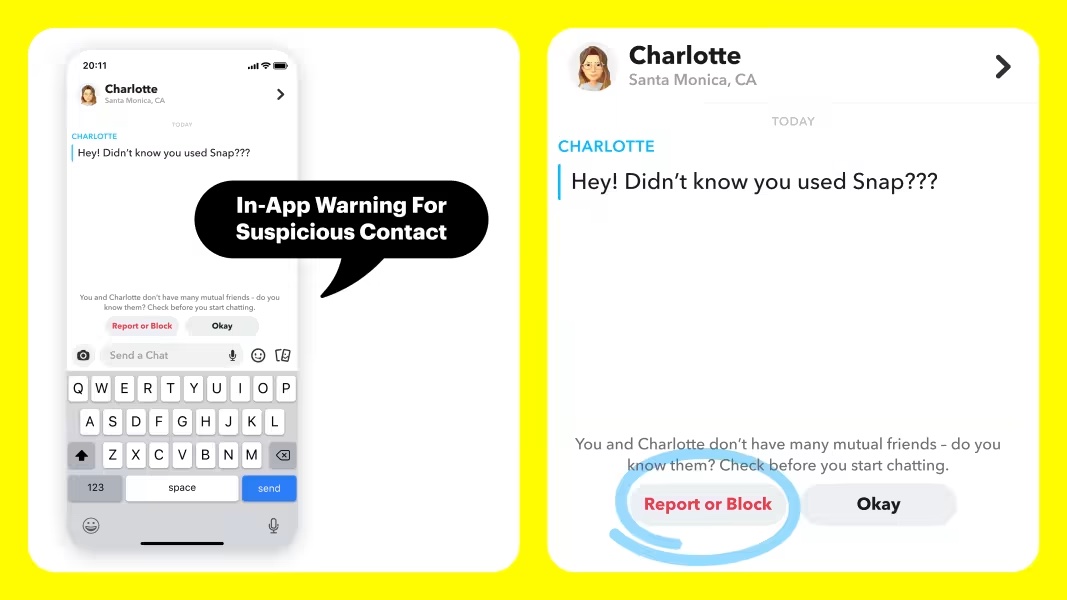

For starters, Snapchat will now display an in-app warning when a minor adds a friend with whom they share no mutual friends or who isn’t in their contacts. This message is meant to help the teen consider more carefully whether they want to be in contact with this person.

Image Credits: Snap

In addition, Snap will raise the bar on when minors appear in search results. Today, Snap already requires a 13- to 17-year-old to have several friends in common with another user before they can show up in search results or as a friend suggestion, it says. But now it will require a greater number of friends in common, based on the number of friends the user has, making it even tougher for teens to connect with people they don’t know.

Similarly, Instagram has launched multiple features to limit teen interaction with unknown adults in previous years, including hiding minor accounts from search and discovery.

Also new today, Snap is implementing a three-part strike system across Stories and Spotlight, where users can find public content that reaches a large audience. Under the new system, Snap says it will immediately remove inappropriate content that it proactively detects or that gets reported to the company. If an account tries to circumvent Snap’s rules, it will also be banned.

Across Stories and Search features, Snapchat will now highlight resources, including hotlines for help, if young people encounter sexual risks, like catfishing, financial sextortion, taking and sharing of explicit images, and more.

Plus, the company noted it will continue to ban accounts of users who try to commit “severe harms,” like threats to another user’s physical or emotional well-being, sexual exploitation and the sale of illicit drugs.

Snap says the new features will be informed by feedback from The National Center on Sexual Exploitation (NCOSE), while its new in-app educational resources were developed with The National Center for Missing and Exploited Children (NCMEC).

For parents, meanwhile, Snap is launching an online resource at parents.snapchat.com as well as a new YouTube explainer series to help get families up to speed on how Snapchat works and how to use the app’s parental controls.

Despite Snap’s proclamations, enforcement of its new rules and policies will be key.

The company has a history of announcing safety features, then not immediately acting on the new rules. For example, when Snap announced new guidelines around anonymous messaging and friend-finding apps in an effort to better protect younger users, TechCrunch discovered a small handful of popular apps had not yet complied with the guidelines and were still allowed to operate.

Snap also sometimes rushes to launch features without adequate protections. Most recently, after Snap announced its new AI feature, My AI, it was immediately discovered to respond inappropriately to minors’ messages, leading Snap to belatedly announce safeguards and parental controls.

The company says it will build on the new features announced today in the “coming months” with additional protections for teens and parents.
