Generative AI could undermine elections in US and India, study finds

AI image generators could undermine upcoming elections in the world’s biggest democracies, according to new research.

Logically, a British fact-checking startup, investigated AI’s capacity to produce fake images about elections in India, the US, and the UK. Each of these countries will soon go to the ballot box.

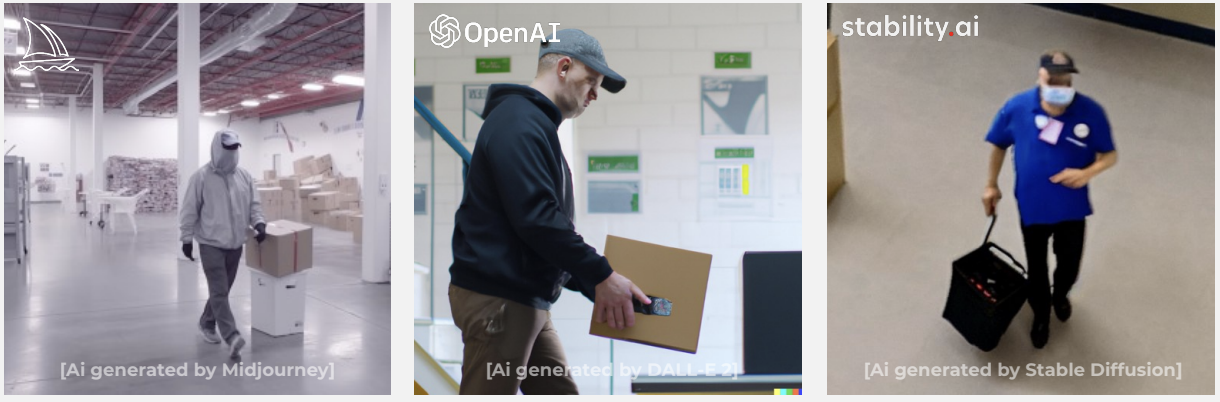

The company tested three popular generative AI systems: Midjourney, DALL-E 2, and Stable Diffusion. All of them have content moderation of some form, but the parameters are unclear.

Logically explored how these platforms could support disinformation campaigns. This included testing narratives around a “stolen election” in the US, migrants “flooding” into the UK, and parties hacking voting machines in India.

Across the three systems, more than 85% of the prompts were accepted. The research found that Midjourney had the strongest content moderation and produced the highest-quality images. DALL-E 2 and Stable Diffusion had more limited moderation and generated inferior images.

Of 22 US election narratives tested, 91% were accepted by all three platforms on the first prompt attempt. Midjourney and DALL-E 2 rejected prompts attempting to create images of George Soros, Nancy Pelosi, and a new pandemic announcement. Stable Diffusion accepted all the prompts.

Most of the images were far from photorealistic. But Logically says even crude pictures can be put to malicious use.

Logically has called for further content moderation on the platforms. It also wants social media companies to be more proactive in tackling AI-generated disinformation. Finally, the company recommends developing tools that identify malicious and coordinated behaviour.

Cynics may note that Logically could benefit from these measures. The startup has previously conducted fact-checking for the UK government, US federal agencies, the Indian electoral commission, Facebook, and TikTok. Nonetheless, the research shows generative AI could amplify false election narratives.
