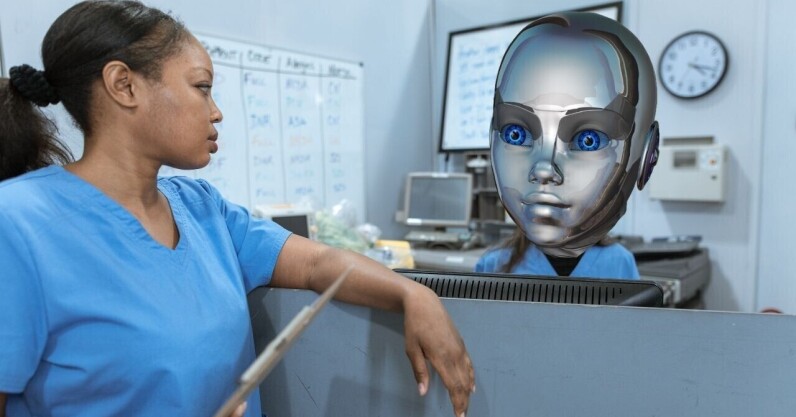

AI in healthcare could exacerbate ethnic and income inequalities, scientists warn

Scientists fear using AI models such as ChatGPT in healthcare will exacerbate inequalities.

The epidemiologists, from the universities of Cambridge and Leicester, warn that large language models (LLMs) could entrench inequities for ethnic minorities and lower-income countries.

Their concern stems from systemic data biases. AI models used in healthcare are trained on information from websites and scientific literature. But evidence shows that ethnicity data is often missing from these sources.

As a result, AI tools can be less accurate for underrepresented groups. This can lead to ineffective drug recommendations or racist medical advice.

“It is widely accepted that a differential risk is associated with being from an ethnic minority background across many disease groups,” the researchers said in their study paper.

“If the published literature already contains biases and less precision, it is logical that future AI models will maintain and further exacerbate them.”

The scientists are also concerned about the threat to low- and middle-income countries (LMICs). AI models are primarily developed in wealthier nations, which also dominate funding for medical research.

Consequently, LMICs are “vastly underrepresented” in healthcare training data. This can lead AI tools to give inaccurate advice to people in these countries.

Despite these qualms, the researchers recognise the benefits that AI can bring to medicine. To mitigate the risks, they suggest several measures.

First, they want the models to clearly describe the data used in their development. They also call for further work to address health inequalities in research, including better recruitment and recording of ethnicity information.

Training data should be adequately representative, while more research is needed on the use of AI for marginalised groups. These interventions, say the researchers, will promote fair and inclusive healthcare.

“We must exercise caution, acknowledging we cannot and should not stem the flow of progress,” said Dr Mohammad Ali from the University of Leicester.
